The Feeling You Won't Admit

You used AI to draft a client proposal last week. It was good. Polished. Sounded like you. And you still read it four times before sending because something in your gut didn't trust it.

That feeling (the quiet unease, the compulsive re-checking, the nagging suspicion that the AI made something up or missed something critical) is not yours alone. Many CEOs using AI for real business work have it. Most feel slightly embarrassed about it, like they should be past this by now. Like trusting AI is a sophistication test they're failing.

You're not failing anything. Your instinct is correct.

The AI industry has a transparency problem, and your distrust is the rational response to it. You're making business decisions — decisions that affect clients, revenue, reputation, legal exposure — based on output from systems that cannot explain how they arrived at that output. No audit trail. No reasoning chain. No source citations. Just a confident-sounding result from a process you can't see.

You wouldn't accept this from a person. An employee who handed you a financial analysis but couldn't explain which numbers they used, what assumptions they made, or why they chose one methodology over another? You'd fire them. Not because the analysis was bad — maybe it was great — but because you can't verify it, you can't learn from it, and you can't defend it to a client or a board if it turns out to be wrong.

AI gets a pass on this standard. It shouldn't.

Why Black Boxes Are the Default

There's a reason most AI tools are opaque, and it's not malicious. It's structural.

Large language models are, at a fundamental level, pattern-matching machines operating across billions of parameters. They don't "reason" the way a human does; they generate statistically probable next tokens based on patterns learned from training data. Asking a model to explain an output the way a person would explain their thinking is genuinely hard, because the model has no thought process to report. It has weights.

But "it's technically hard" isn't an excuse for shipping opaque products to business users making real decisions. The difficulty of the engineering challenge doesn't change the user's need for transparency. It just means the companies building these tools have to work harder.

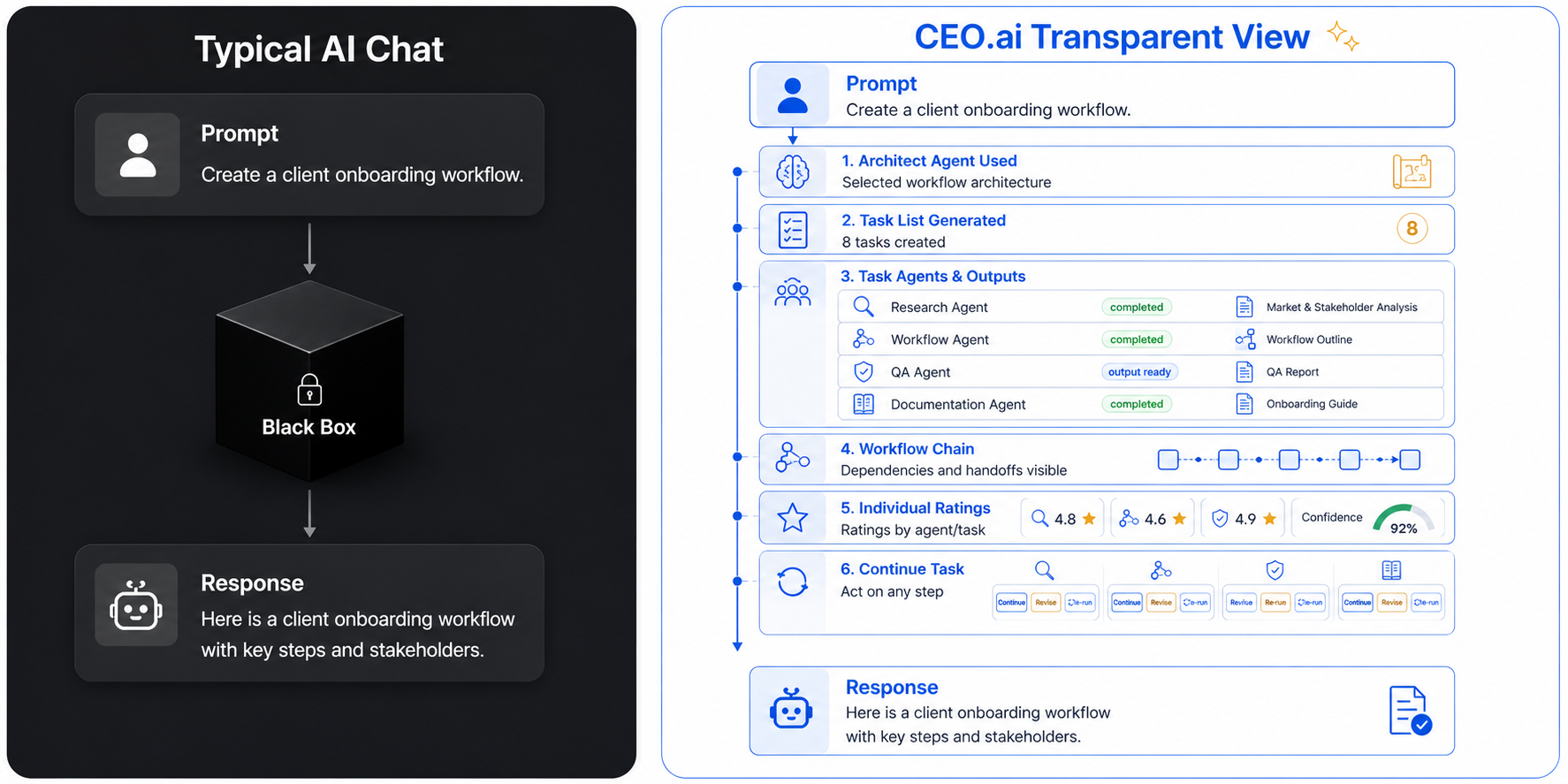

Most don't. Most wrap a model in a chat interface, show you input and output, and call it done. The space between — where the decisions happened, where the data was sourced, where the judgment calls were made — is invisible.

The consequences ripple outward. Because you don't trust the output, you re-check everything. Because you re-check everything, you don't save any time. Because you don't save time, AI feels like a gimmick instead of a force multiplier. And because it feels like a gimmick, you stop using it for anything important — which means AI only touches the tasks you don't care about, which means it never delivers transformative value.

The black box doesn't just erode trust. It erodes ROI. You can't delegate important work to something you can't verify. And if AI only handles unimportant work, why bother?

What Transparent AI Actually Means

"Transparency" has become a marketing word. Every AI company claims it. Almost none deliver it in a way that matters to someone running a business. Here's what transparency actually requires — not in theory, but in the day-to-day experience of a CEO who needs to trust AI output enough to act on it.

"I don't need to understand how the neural network works. I need to know which documents my AI read, what it concluded from each one, and why it recommended Option A over Option B. The same way I'd want an analyst to explain their work."

— Founder of a 35-person financial advisory firm

That quote captures it precisely. Transparent AI doesn't mean opening the model's weights and showing you tensor calculations. It means showing you the work — in language you understand, at the level of detail you need, whenever you want to see it.

Apply the same standard to AI that you'd apply to a new hire. Can it explain what it did and why? Can it cite its sources? Can it walk you through its reasoning when you challenge a conclusion? If a person couldn't do those things, you'd question their competence. AI shouldn't get a lower bar.

The Five Layers of AI Transparency

Real transparency isn't a single feature. It's a stack of five layers, each one answering a different question you need answered before you'll trust AI with consequential work.

Source Visibility — "What did you look at?"

When an agent produces a recommendation, you should be able to see exactly which documents, data sources, conversations, and knowledge base entries it drew from. Not a vague "based on your files." Specific file names. Specific passages. Clickable references you can verify in 10 seconds. If the AI used your Q3 revenue report and your competitor analysis from March, you should see both cited explicitly.
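For readers who want to see what "specific file names, specific passages" could look like as data, here is a minimal sketch. Every name in it (`SourceCitation`, `Recommendation`, the file names, the placeholder passages) is a hypothetical illustration, not the structure of any particular product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SourceCitation:
    """One specific passage an agent drew on (hypothetical structure)."""
    file_name: str  # the exact file, not a vague "your files"
    location: str   # page or section, so a reader can check it quickly
    passage: str    # the text that was actually used


@dataclass
class Recommendation:
    """An output that carries its own list of sources."""
    text: str
    sources: list[SourceCitation] = field(default_factory=list)

    def cited_files(self) -> list[str]:
        # Distinct file names, in the order they were first used.
        return list(dict.fromkeys(s.file_name for s in self.sources))

    def is_verifiable(self) -> bool:
        # An output with no explicit sources cannot be checked at all.
        return bool(self.sources)


# Placeholder values for illustration only.
rec = Recommendation(
    text="Placeholder recommendation text.",
    sources=[
        SourceCitation("q3-revenue-report.pdf", "page 4", "placeholder passage A"),
        SourceCitation("competitor-analysis-march.docx", "section 2", "placeholder passage B"),
        SourceCitation("q3-revenue-report.pdf", "page 9", "placeholder passage C"),
    ],
)

print(rec.cited_files())
# ['q3-revenue-report.pdf', 'competitor-analysis-march.docx']
```

The point of the sketch is the shape: the citation travels with the output, so checking a claim means opening one named file at one named location.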

Reasoning Chain — "How did you get from input to output?"

The step-by-step logic connecting the input to the final deliverable. "I found 4 relevant data points. I weighted them based on recency and relevance. I considered two possible approaches. I chose Approach A because it aligns with the pattern in your previous 6 proposals." This is the difference between "here's your answer" and "here's how I arrived at your answer." The second builds trust. The first demands faith.

Confidence Flagging — "How sure am I?"

Not everything AI produces deserves the same level of trust. A draft email based on 200 prior examples of your writing? High confidence. A market analysis for a vertical you've never discussed? Low confidence, and the AI should say so. Transparent AI flags uncertainty instead of burying it under polished prose. This is the critical feature most tools lack — they make everything sound equally authoritative, regardless of how much data they actually had to work with.
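As a rough sketch of the idea, a confidence flag can be as simple as a label derived from how much evidence backed the output. The function name, inputs, and the threshold of 100 examples below are assumptions chosen for illustration; a real system would need its own, tested criteria.

```python
from enum import Enum


class Confidence(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


def flag_confidence(prior_examples: int, topic_seen_before: bool) -> Confidence:
    """Label an output by how much evidence was behind it.

    The threshold is an illustrative assumption, not a value from any real tool.
    """
    if not topic_seen_before or prior_examples == 0:
        return Confidence.LOW     # nothing to ground the output in
    if prior_examples >= 100:
        return Confidence.HIGH    # plenty of prior material to draw on
    return Confidence.MEDIUM


# A draft email backed by 200 prior examples of your writing.
print(flag_confidence(200, topic_seen_before=True).value)   # high

# A market analysis for a vertical you've never discussed.
print(flag_confidence(0, topic_seen_before=False).value)    # low
```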

Action Logging — "What did each agent do, and when?"

When you have multiple agents working together — a research agent, a writing agent, a review agent — you need an audit trail. Which agent handled which step? What did it produce? What did the next agent do with that output? If something goes wrong in the final deliverable, you need to trace it back to the specific agent and step where the error entered the chain. No trace, no diagnosis. No diagnosis, no improvement.
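To illustrate the tracing step, here is a small sketch of an action log and a lookup for the earliest step whose output was marked as wrong. The record fields, the agent names, and the `output_ok` flag (set by whoever checks each step) are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ActionRecord:
    """One entry in a hypothetical multi-agent audit trail."""
    step: int
    agent: str
    summary: str
    output_ok: bool  # assumption: a reviewer or check marks each step's output
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def first_failing_step(log: list[ActionRecord]) -> Optional[ActionRecord]:
    """Return the earliest step whose output was marked wrong, or None."""
    for record in sorted(log, key=lambda r: r.step):
        if not record.output_ok:
            return record
    return None


log = [
    ActionRecord(1, "research", "collected source documents", True),
    ActionRecord(2, "writing", "drafted the proposal", False),
    ActionRecord(3, "review", "checked tone and formatting", True),
]

culprit = first_failing_step(log)
if culprit is not None:
    print(f"Error entered at step {culprit.step} ({culprit.agent} agent)")
# Error entered at step 2 (writing agent)
```

Without the per-step records there is nothing to search, which is the practical meaning of "no trace, no diagnosis."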

Decision History — "What have I approved before, and how does this compare?"

Over time, transparent AI builds a record of your decisions: what you approved, what you edited, what you rejected and why. This isn't just memory. It's accountability infrastructure. When an agent makes a judgment call, it should be able to reference: "You approved a similar approach for the Henderson deal in March." Now you're not evaluating blind. You're evaluating against a track record. That's long-term memory working as a trust engine, not just a knowledge store.
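A decision history of this kind can be sketched as a list of past rulings that an agent searches for precedents. The `Decision` fields, the approach labels, and the second deal name are invented for illustration; only the Henderson example comes from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One past ruling by the human reviewer (hypothetical structure)."""
    deal: str
    approach: str
    outcome: str  # "approved", "edited", or "rejected"
    note: str = ""


def precedents(history: list[Decision], approach: str) -> list[Decision]:
    """Return earlier decisions where the same approach was approved."""
    return [d for d in history if d.approach == approach and d.outcome == "approved"]


history = [
    Decision("Henderson", "tiered-pricing", "approved"),
    Decision("Example Co", "flat-fee", "rejected", note="margin too thin"),
]

for d in precedents(history, "tiered-pricing"):
    print(f"You approved a similar approach for the {d.deal} deal.")
# You approved a similar approach for the Henderson deal.
```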

Trust Is Built, Not Declared

No amount of marketing copy will make you trust AI. Trust is accumulated the same way it is with people: through repeated, verifiable delivery over time.

The first week, you check everything. You open the reasoning chain on every output. You click through the source citations. You challenge the confidence scores. Good. That's the correct behavior. You're calibrating.

By week four, patterns emerge. You notice the AI cites the right documents consistently. Its reasoning chains make sense. When it flags low confidence, it's usually on exactly the topics where you'd expect uncertainty. When it flags high confidence, the outputs are reliably strong. You start checking less — not because you're lazy, but because the evidence supports it.
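One way to make this calibration concrete is to record, for each output you review, the confidence flag it carried and whether you accepted it without edits, then compare acceptance rates per flag. The sketch below assumes that simple record format, and the sample data is made up for illustration.

```python
from collections import defaultdict


def acceptance_rate_by_flag(reviews):
    """Compute the share of outputs accepted without edits, per confidence flag.

    `reviews` is an iterable of (flag, accepted) pairs, a format assumed here.
    """
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for flag, ok in reviews:
        totals[flag] += 1
        accepted[flag] += int(ok)
    return {flag: accepted[flag] / totals[flag] for flag in totals}


# Made-up review log: four high-confidence and four low-confidence outputs.
reviews = [
    ("high", True), ("high", True), ("high", True), ("high", False),
    ("low", False), ("low", False), ("low", True), ("low", False),
]

rates = acceptance_rate_by_flag(reviews)
print(rates)
# {'high': 0.75, 'low': 0.25}
```

If high-confidence outputs are accepted far more often than low-confidence ones, the flags are carrying real information and checking less is justified. If the two rates look the same, the flags are decoration.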

By month three, you've developed a working relationship built on evidence. You know where the AI is strong and where it's weak. You know which agent teams produce work you can send directly and which ones need a quick scan. You trust it the way you trust a reliable employee — not blindly, but based on a track record you can point to.

- Trust through verification: every output is auditable, every source is cited, every reasoning step is visible

- Trust through honesty: the AI tells you when it's uncertain instead of disguising guesses as facts

- Trust through track record: decision history accumulates, letting you evaluate today's output against a pattern of past performance

- Trust through accountability: when something goes wrong, the audit trail shows exactly what happened and where, so you can fix it — not wonder about it

This is the design philosophy behind CEO.ai's Conversation Manager and Agent Manager. They weren't built to hide complexity from you. They were built to show you exactly what your AI is doing, why it's doing it, and how confident it is — at whatever level of detail you want, whenever you want it.

The name of the platform is CEO.ai. It's built for people who sign their name to the work that goes out the door. If you can't explain something, you can't sign it. And if your AI can't explain itself, you can't sign what it produces. That's not a fear to overcome. That's a standard to enforce.

Transparency doesn't slow you down — it speeds you up. When you can verify AI output in 30 seconds instead of re-doing the work in 30 minutes, you delegate faster, approve faster, and scale faster. The bottleneck was never AI capability. It was your ability to trust the output enough to act on it.

Key Takeaways

- Your distrust of AI output is rational. Most AI tools are black boxes that can't explain their reasoning, cite their sources, or flag their uncertainty. Skepticism is the correct response.

- Black boxes kill ROI. If you have to re-check everything, AI doesn't save you time. If it doesn't save you time, it only handles trivial work. If it only handles trivial work, it never delivers transformative value.

- Transparency requires five layers: source visibility, reasoning chains, confidence flagging, action logging, and decision history. Most AI tools have zero of the five.

- Trust is built, not declared. It comes from repeated, verifiable delivery, not from marketing claims. Transparent AI gives you the evidence to calibrate your trust over time.

- Transparency is a speed multiplier. When you can verify in 30 seconds instead of re-doing in 30 minutes, you delegate faster, scale faster, and trust the system more every week.

Work With AI You Can Actually Verify

Tell CEO.ai what you need. Every output comes with full source citations, reasoning chains, and confidence scores — so you can trust the work, not just hope it's right.

Greg Marlin

Founder, CEO.ai

Greg refused to ship AI that couldn't show its work. CEO.ai was built with transparency at the architecture level — not as a compliance checkbox, but because the platform is named after the person who signs the output. If a CEO can't explain something their AI produced, the AI failed. He writes about what trustworthy AI actually looks like in practice.